AI: inhuman after all?

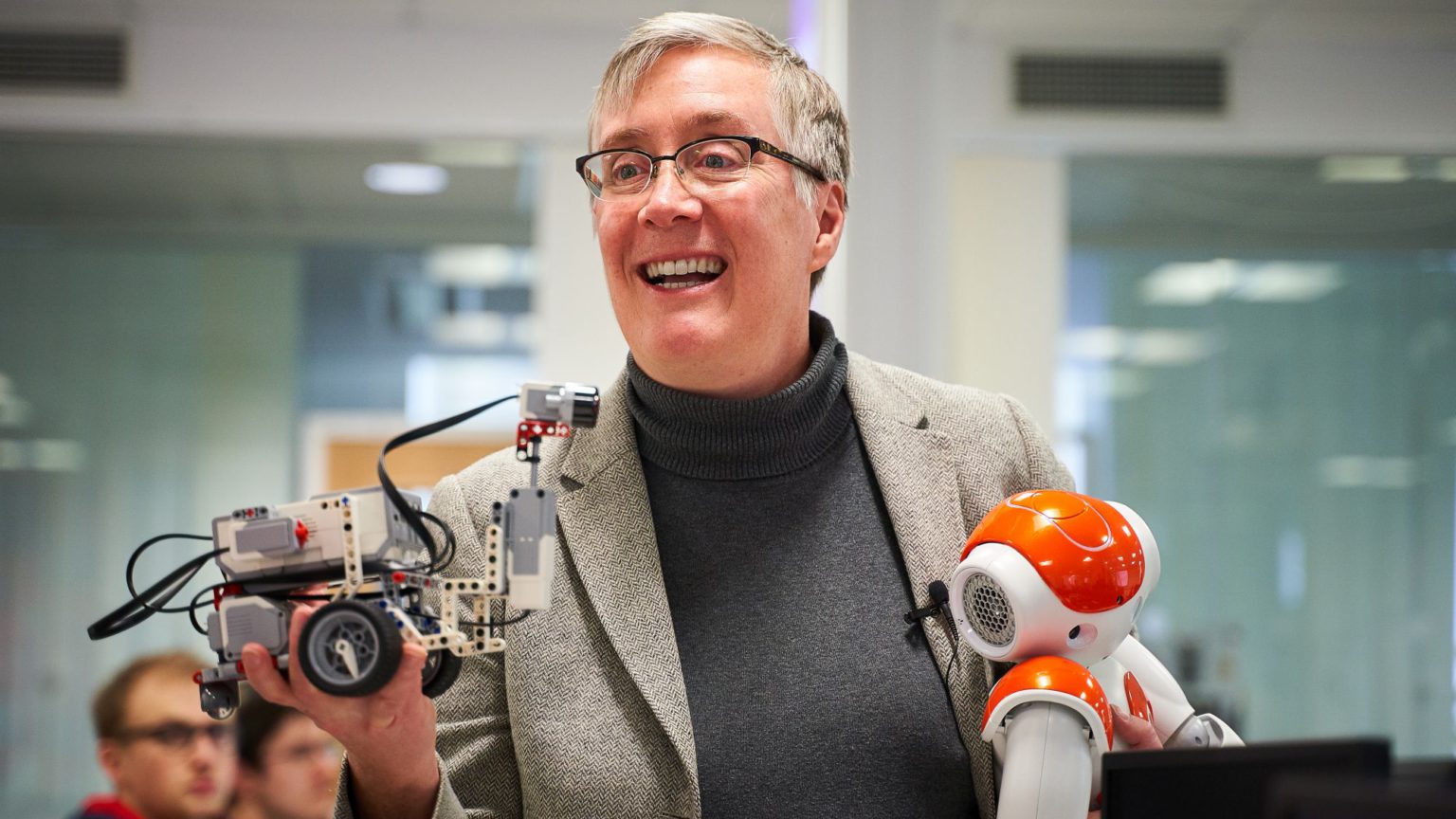

Joanna Bryson on what the world gets wrong about robots and AI.

Fears of a robot takeover have long been staples of great science fiction. Today, fears that robots will take over the world – or at the very least, your job – have become a staple in the news. In recent years, we’ve seen intelligent robots not only winning at chess, but also taking part in political debates and appearing to give evidence in parliaments. But are these fears justified? Is AI really anywhere close to developing human-like qualities? spiked caught up with Joanna Bryson, an associate professor at the University of Bath working on artificial intelligence, to find out.

spiked: Are robots going to take over the world?

Joanna Bryson: No. But it’s possible that people will take over the world using robots. Physical robots are not the biggest issue and we certainly shouldn’t attribute any agency to the robots themselves.

It’s arguable that some people are already taking over the world with artificial intelligence. AI is absolutely everywhere. If you think of AI as machines that are almost exactly like humans, then you’re never going to see it. There will never be AI that has the same motivations as humans. We are basically apes and no AI is going to share our ape intentions. AI is also unlikely to want to take over the world of its own volition – most humans don’t want to do that, either.

I define AI as intelligence that is an artefact. Intelligence is about moving from perception to action. It’s being able to recognise context, generate some information and possibly some agency to change something in the world. And that is everywhere. Our thermostats are intelligent in that way.

People have been arguing over these things for 50 years. From a policy perspective, it’s important to recognise that AI isn’t something that’s about to happen. It’s a thing that’s been happening increasingly ever since we’ve had digital technology. We don’t have to predict the future, we need to better understand the recent past.

spiked: How human can AI be?

Bryson: It’s sort of a backwards question. The question we should be asking is what it is about humans that we might propagate with AI. It’s clear that we can build machines that can replicate certain things humans can do. Certainly, there are aspects of what we are that we can describe to a machine and push out. But we need to recognise that it is us doing the work, otherwise people will be confused. For instance, when machines start to generate art, people might think the machine has some kind of deep inner life. But the inner life being reflected is a human inner life.

We produced a paper in 2017 which many people thought meant that AI was ‘sexist’ and ‘racist’. But what we really showed was that just from looking at words and how words are used, you get this enormous amount of human experience and you can feed some of that into AI. This particular AI was just a giant spreadsheet of words. There’s no real lived experience in AI, of course. But when humans talk we express our lived experience and so the reason AI looks human to us is because it is replaying the computations we’ve already done. When people see these things replicated by AI, they think there is something human.

AI actually works more like your dreams. Dreams don’t replicate your actual life, but your brain is cycling through things it already knows. Sometimes your dreams seem real, other times they are crazy and have no relation to normality. The same can be true with AI.

spiked: What do you make of attempts to create a legal status for AI or ‘rights for robots’?

Bryson: There are two different groups of people that want to do this. One group simply don’t understand that AI is not a part of themselves; they have these futuristic notions about AI. They are emotionally invested in AI. Usually, they don’t want to die and think they can extend their lives through it. They think of AI as if it were their offspring. And those people are advocating for AI to have rights. But they are not the real concern.

The real danger is with the people who own shell companies. Many of the problems we have in the world today come from people trying to evade the accountability of democracies and regulatory bodies. And AI would be the ultimate shell company. If AI is human-like, the argument goes, then you can use human justice on it. But that’s just false. You can’t even use human justice against shell companies. And there’s no way to build AI that can actually care about avoiding corruption or obeying the law. So it would be a complete mistake – a huge legal, moral and political hazard – to grant rights to AI.

spiked: Has a sci-fi view of AI made us worry about the wrong things?

Bryson: Science fiction is a large genre so I don’t want to say that it’s a problem in itself. Certainly, that’s where people get the idea that the robots themselves are the problem. But we design the robots. It is our obligation to build robots that we don’t need to worry about!

Today I’m encouraged because people have started to worry about the right things. People have started realising that having all these cameras and microphones everywhere, for instance, can be a problem when all our data gets uploaded to the cloud and people access it. There is an insane amount of information we all have about each other. And even if you are very careful and you don’t have any cameras, microphones or even WiFi in your house, because so many other people have given away their data, now just your picture gives away a huge amount of information. So what we have to do is create legal defences so that people can still be distinct from each other. We have to recognise the ways in which power has shifted because of all the information about us that is out there.

Joanna Bryson was talking to Fraser Myers.
