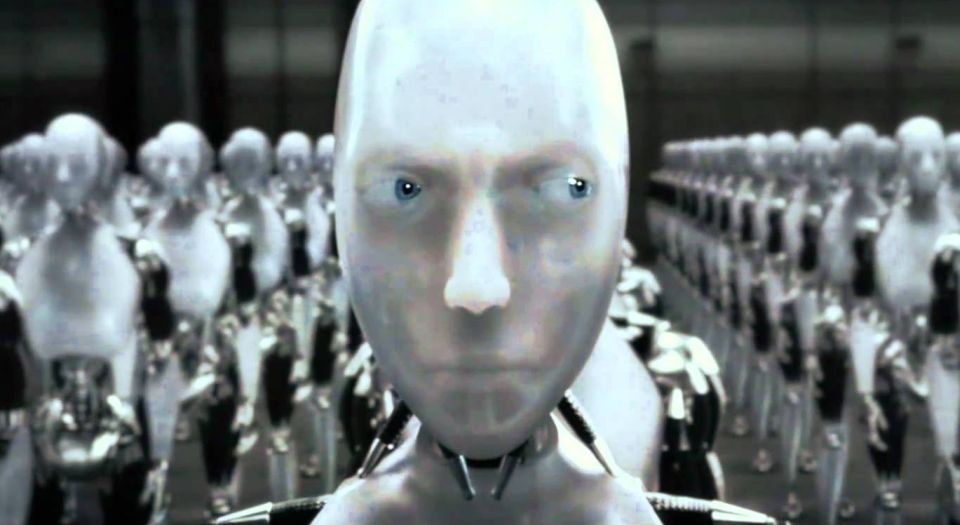

The robots are not taking over

Stephen Hawking may be scared, but AI promises to help, not hinder us.

Do you know the most dangerous threat facing mankind? Is it climate change? Ebola? ISIS? Or, to follow President Obama, is the threat on a par with Ebola and ISIS and going by the name of Vladimir Putin? After all, a columnist on The Sunday Times recently compounded the distaste by describing the Russian leader as Putinstein – in the process revealing how little she knew of Mary Shelley’s seminal anti-experimentation novel Frankenstein (1818), whose title refers to a doctor inventor, not his sad and vengeful robotic construct.

In fact, the biggest threat facing mankind is one that has in some ways only just been discovered: artificial intelligence (AI). The physicist Stephen Hawking has said that AI could become ‘a real danger’ in the ‘not-too-distant’ future. Hawking added that ‘the risk is that computers develop intelligence and take over. Humans, who are limited by slow biological evolution, couldn’t compete, and would be superseded.’

That Hawking himself is aided by computers in his speech is an irony. For the fact is that computers are likely to remain a help much more than a hindrance for many years to come. Sure, they might run amok; but that will be to do with bad or malevolent programming, not the arrival of true intelligence.

Like the IT boosters of Silicon Valley, Hawking invokes Moore’s Law. Yet it is one thing to note the increase in computer power over the years; it is quite another to confer on semiconductors the power to think – and, in particular, the power to think in a social way, using the insights of others and the prevailing zeitgeist to form novel and creative conclusions. Computers are not ‘smart’, any more than cities are. They cannot form aesthetic, ethical, philosophical or political judgments, and never will. These are the special faculties of humankind, and no amount of electrons – or ‘digital democracy’, for that matter – can substitute for them.

In fact, the fear of AI is really a form of hatred for mankind. It marks a new level of estrangement from the things we make, and a rising note of nausea around innovation. That is why Professor Nick Bostrom, director of the University of Oxford’s Future of Humanity Institute (which is distinct, please note, from Cambridge’s Centre for the Study of Existential Risk), says that it’s ‘non-obvious’ that more innovation would be better. Well, maybe; but it recently took me about 90 minutes to go by rail from London Paddington to Bostrom’s Oxford, so a bit more innovation in the form of high-speed trains would not go amiss in Britain today.

Hawking and Bostrom are not isolated individuals. In the middle of all today’s crazed euphoria about IT, complete with the taxi application Uber being valued at $41 billion, there is a profound and bilious feeling of apprehension about where IT might lead. Thus, when nothing like AI exists after decades of research in the field, a leading article in the Financial Times intones that pioneers in the field ‘must tread carefully’.

Yes, IBM’s Watson supercomputer recently won the gameshow Jeopardy! But did it know it won? No – the viewers did, but the Giant Calculator did not. That a machine passes the Turing test, in that it looks intelligent, doesn’t mean that it is intelligent.

Readers of spiked will understand that. But my smartphone, into which I have dictated this, won’t.

James Woudhuysen is editor of Big Potatoes: the London Manifesto for Innovation.
